In this post, we’ll delve into a particular family of classifiers called naive Bayes classifiers. These are methods that rely on Bayes’ theorem and the naive assumption that every pair of features is conditionally independent given a class label. If this doesn’t make sense to you, keep reading!

As a toy example, we’ll use the well-known iris dataset (CC BY 4.0 license) and a specific kind of naive Bayes classifier: the Gaussian naive Bayes classifier. Recall that the iris dataset has 4 numerical features and that the target is one of 3 types of iris flower (setosa, versicolor, virginica).

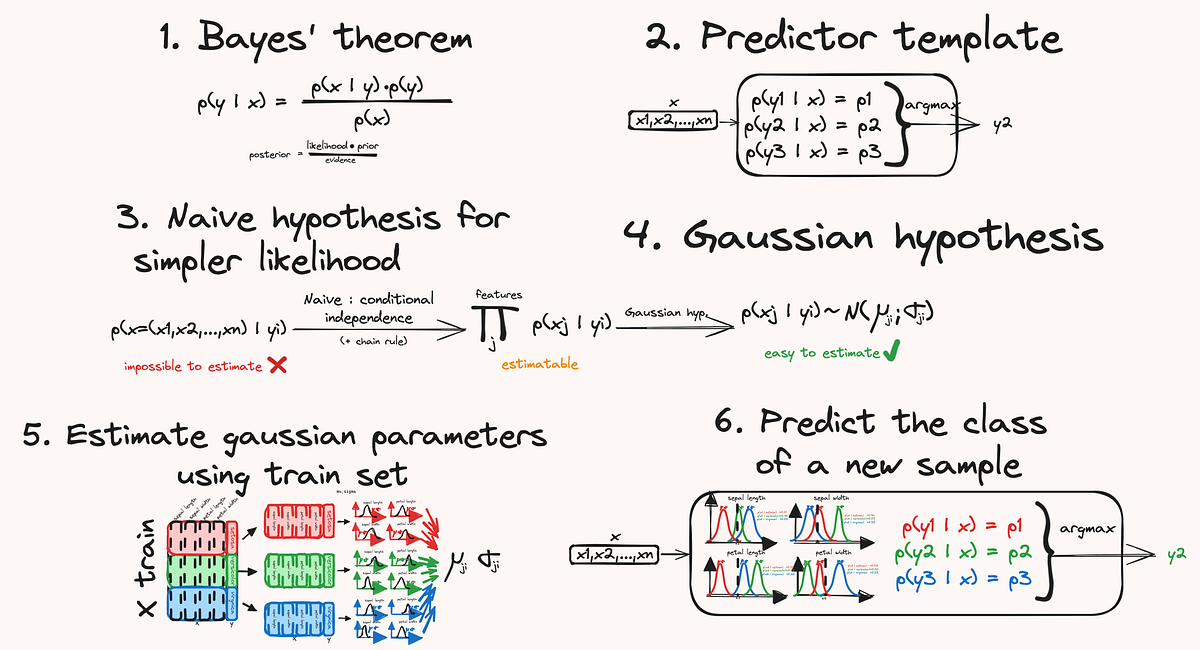

We’ll decompose the method into the following steps:

- Reviewing Bayes’ theorem: this theorem provides the mathematical formula that allows us to estimate the probability that a given sample belongs to each class.

- We can create a classifier, a tool that returns a predicted class for an input sample, by computing the probability that the sample belongs to each class and returning the class with the highest probability.

- Using the chain rule and the conditional independence hypothesis, we can simplify the probability formula.

- Then, to be able to compute the probabilities, we make another assumption: that, within each class, every feature follows a Gaussian distribution.

- Using a training set, we can estimate the parameters of those Gaussian distributions.

- Finally, we have all the tools we need to predict a class for a new sample.
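To make these steps concrete before going through them one by one, here is a minimal sketch of the whole pipeline in plain Python. It is an illustration under stated assumptions, not a reference implementation: the function names (`fit`, `log_gaussian`, `predict`) are hypothetical, the six training rows are made-up toy values that only resemble iris measurements (they are not taken from the real dataset), and a small constant is added to each variance to avoid dividing by zero.

```python
import math
from collections import defaultdict


def fit(samples, labels):
    """Estimate, for each class, its prior and per-feature Gaussian parameters."""
    grouped = defaultdict(list)
    for x, y in zip(samples, labels):
        grouped[y].append(x)

    model = {}
    for label, rows in grouped.items():
        n = len(rows)
        columns = list(zip(*rows))  # one tuple of values per feature
        means = [sum(col) / n for col in columns]
        # Maximum-likelihood variance, plus a tiny floor (an assumption of this
        # sketch) so that a constant feature does not cause a division by zero.
        variances = [
            sum((v - m) ** 2 for v in col) / n + 1e-9
            for col, m in zip(columns, means)
        ]
        prior = n / len(samples)
        model[label] = (prior, means, variances)
    return model


def log_gaussian(x, mean, var):
    """Log of the Gaussian density with the given mean and variance, at x."""
    return -0.5 * math.log(2 * math.pi * var) - (x - mean) ** 2 / (2 * var)


def predict(model, x):
    """Return the class with the highest log prior + summed log likelihoods."""
    scores = {}
    for label, (prior, means, variances) in model.items():
        # Conditional independence: the joint likelihood is a product over
        # features, which becomes a sum in log space.
        scores[label] = math.log(prior) + sum(
            log_gaussian(v, m, s) for v, m, s in zip(x, means, variances)
        )
    return max(scores, key=scores.get)


# Made-up toy rows: sepal length, sepal width, petal length, petal width (cm).
X = [
    [5.0, 3.4, 1.5, 0.2],
    [4.8, 3.0, 1.4, 0.1],
    [5.2, 3.5, 1.6, 0.3],
    [6.0, 2.8, 4.5, 1.4],
    [5.7, 2.9, 4.2, 1.3],
    [6.2, 2.7, 4.7, 1.5],
]
y = ["setosa", "setosa", "setosa", "versicolor", "versicolor", "versicolor"]

model = fit(X, y)
print(predict(model, [5.1, 3.3, 1.5, 0.2]))
```

The sketch works with log probabilities rather than raw probabilities. Multiplying many small densities can underflow, whereas summing their logarithms is numerically safer and leaves the winning class unchanged.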


Bayes’ theorem is a probability theorem that states the following:

$$P(A|B) = \frac{P(B|A)\,P(A)}{P(B)}$$

- P(A|B) is the conditional probability that A is true (or that A happens) given that B is true (or that B happened). It is also called the posterior probability of A given B: "posterior" because we update the probability that A is true after learning that B is true.