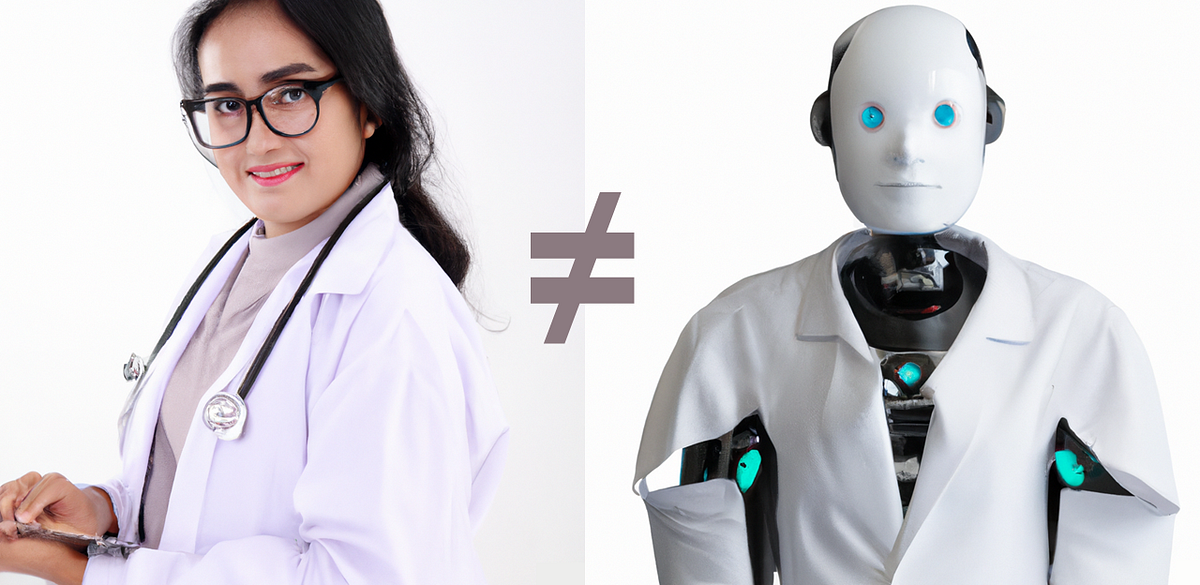

Last year, ChatGPT passed the US Medical Licensing Exam (USMLE) and was reported to be “more empathetic” than real doctors. ChatGPT currently has around 180 million users; if a mere 10% of them have asked ChatGPT a medical question, that’s 18 million people, roughly twice the population of New York City, using ChatGPT like a doctor. There’s an ongoing explosion of medical chatbot startups building thin wrappers around ChatGPT to dole out medical advice. But ChatGPT is not a doctor, and using ChatGPT for medical advice is not only against OpenAI’s Usage policies, it can also be dangerous.

In this article, I identify four key problems with using existing general-purpose chatbots to answer patient-posed medical questions. I provide examples of each problem using real conversations with ChatGPT. I also explain why building a chatbot that can safely answer patient-posed questions is completely different from building a chatbot that can answer USMLE questions. Finally, I describe steps that everyone (patients, entrepreneurs, doctors, and companies like OpenAI) can take to make chatbots medically safer.

Notes

For readability, I use the term “ChatGPT,” but the article applies to all publicly available general-purpose large language models (LLMs), including ChatGPT, GPT-4, Llama2, Gemini, and others. A few LLMs specifically designed for medicine do exist, like Med-PaLM; this article is not about those models. I’m focused here on general-purpose chatbots because (a) they have the most users; (b) they’re easy to access; and (c) many patients are already using them for medical advice.

In the chats with ChatGPT, I provide verbatim quotes of ChatGPT’s responses, with ellipses […] to indicate material that was left out for brevity. I never left out anything that would’ve changed my assessment of ChatGPT’s response. For completeness, the full chat transcripts are provided in a Word document attached at the end of this article. The words “Patient:” and “ChatGPT:” are dialogue tags that were added afterwards for clarity; they were not part of the prompts or responses.