How the DSPy framework solves the fragility problem in LLM-based applications by replacing prompting with programming and compiling

Building applications with large language models (LLMs) is currently not only complex but also fragile. Typical pipelines rely on prompts that are hand-crafted through trial and error, because LLMs are sensitive to how they are prompted. As a result, when you change a piece of your pipeline, such as the LLM or your data, you will likely weaken its performance unless you adapt the prompt (or the fine-tuning steps).

DSPy [1] is a framework that aims to solve the fragility problem in language model (LM)-based applications by prioritizing programming over prompting. Whenever you change a component, it lets you recompile the entire pipeline to optimize it for your specific task, instead of repeating manual rounds of prompt engineering.
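To make the idea of "declare the task, then compile the prompt" more concrete, here is a toy sketch in plain Python. It does not use DSPy and does not mirror its API: `Task`, `Program`, `compile_program`, and `stub_lm` are hypothetical names invented for this illustration, and `stub_lm` is a hard-coded stand-in for a real LLM call that only answers in the expected format when the prompt contains a demonstration.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    """What the step should do, declared without any prompt wording."""
    instruction: str
    input_name: str
    output_name: str


@dataclass
class Program:
    """A task plus the demonstrations that the compile step selects."""
    task: Task
    demos: list[tuple[str, str]] = field(default_factory=list)

    def render_prompt(self, value: str) -> str:
        # The prompt text is generated from the declaration, never hand-written.
        parts = [self.task.instruction]
        for x, y in self.demos:
            parts.append(f"{self.task.input_name}: {x}\n{self.task.output_name}: {y}")
        parts.append(f"{self.task.input_name}: {value}\n{self.task.output_name}:")
        return "\n\n".join(parts)

    def __call__(self, lm, value: str) -> str:
        return lm(self.render_prompt(value)).strip()


def compile_program(program, lm, trainset, metric):
    """Greedily add a training example as a demo only if it raises the train score."""
    def score(p):
        return sum(metric(p(lm, x), y) for x, y in trainset) / len(trainset)

    best = Program(program.task, list(program.demos))
    best_score = score(best)
    for example in trainset:
        candidate = Program(best.task, best.demos + [example])
        candidate_score = score(candidate)
        if candidate_score > best_score:
            best, best_score = candidate, candidate_score
    return best


def stub_lm(prompt: str) -> str:
    """Fake LM (assumption): replies with a bare label only if a demo is in the prompt."""
    question = prompt.rsplit("Review:", 1)[1]
    label = "positive" if "great" in question else "negative"
    has_demo = prompt.count("Review:") > 1
    return label if has_demo else f"I would say this is {label}."


task = Task("Classify the sentiment of the review.", "Review", "Sentiment")
program = Program(task)
trainset = [("great phone", "positive"), ("broke in a day", "negative")]
compiled = compile_program(program, stub_lm, trainset, lambda pred, gold: pred == gold)

print(program(stub_lm, "great battery"))   # uncompiled: free-form answer
print(compiled(stub_lm, "great battery"))  # compiled: bare label
```

The point of the sketch is the division of labor: if you swap `stub_lm` for a different model or change `trainset`, you rerun `compile_program` rather than editing prompt text by hand. A real optimizer would score candidates on held-out data rather than on the same examples it uses as demonstrations.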

Although the paper [1] on the framework was published back in October 2023, I only recently learned about it. After watching just one video (“DSPy Explained!” by Connor Shorten), I could already understand why the developer community is so excited about DSPy!

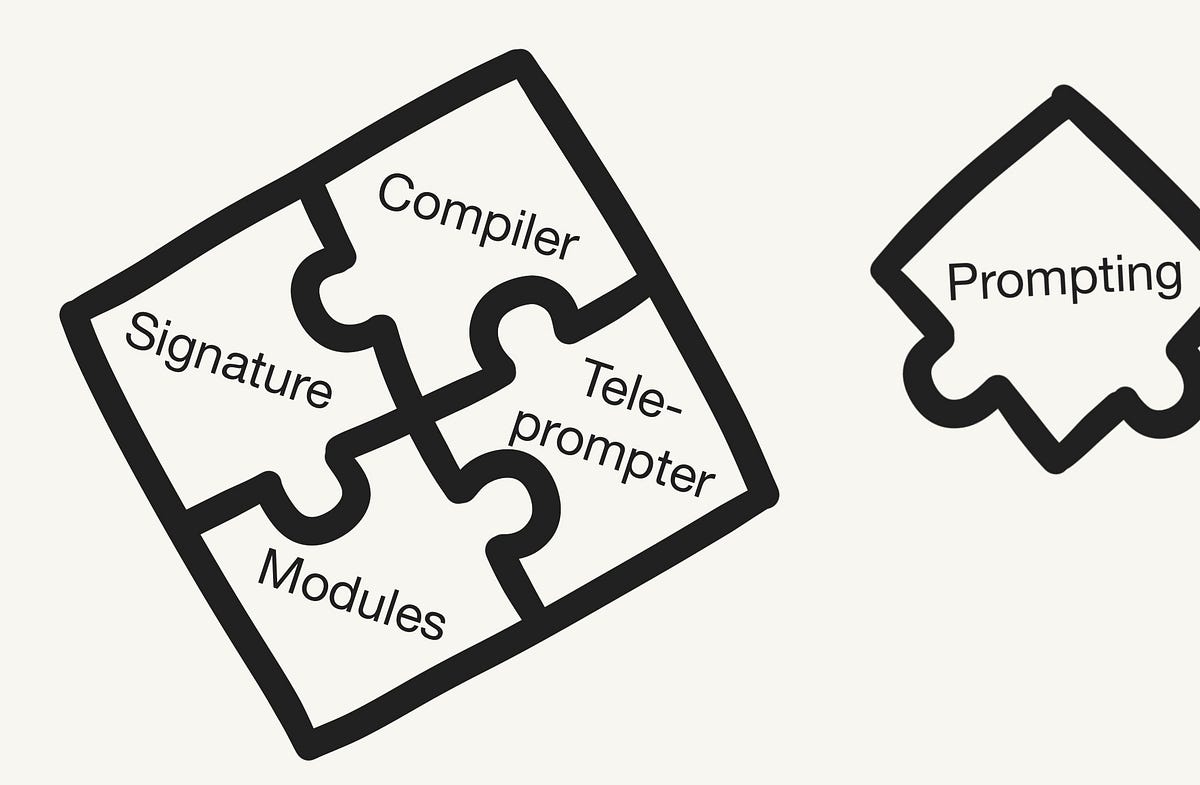

This article gives a brief introduction to the DSPy framework by covering the following topics:

DSPy (“Declarative Self-improving Language Programs (in Python)”, pronounced “dee-es-pie”) [1] is a framework for “programming with foundation models” developed by researchers at Stanford NLP. It emphasizes programming over prompting, moving the work of building LM-based pipelines away from manipulating prompts and toward writing programs. Thus, it aims to solve the…