Unlock the Power of Caching to Scale AI Solutions: A Comprehensive Overview of LangChain Caching


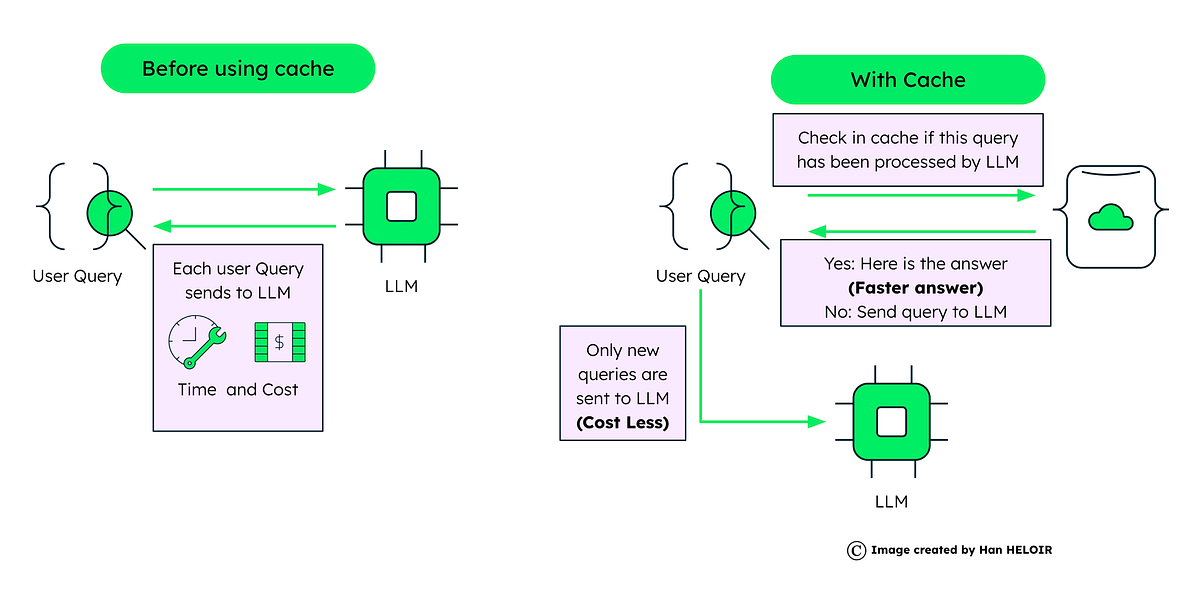

Despite the transformative potential of AI applications, approximately 70% never make it to production. The usual obstacles are cost, performance, security, flexibility, and maintainability. In this article, we address two of them, escalating costs and the need for high performance, and show how a caching strategy can tackle both.

The Cost Challenge: When Scale Meets Expense

Running AI models, especially at scale, can be prohibitively expensive. Take, for example, the GPT-4 model, which costs $30 for processing 1M input tokens and $60 for 1M output tokens. These figures can quickly add up, making widespread adoption a financial challenge for many projects.
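To see how quickly these prices add up, here is a minimal sketch of a cost estimator. It assumes only the two GPT-4 prices quoted above; the function name `estimate_cost` and the constant names are illustrative and do not come from any library.

```python
# Prices quoted above for GPT-4, in USD per 1M tokens (assumed fixed for this sketch).
INPUT_PRICE_PER_M = 30.0
OUTPUT_PRICE_PER_M = 60.0


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost for the given input and output token counts."""
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


# Example: 1M input tokens plus 1M output tokens.
print(estimate_cost(1_000_000, 1_000_000))  # 90.0
```

Because output tokens cost twice as much as input tokens here, the split between prompt and response length matters as much as the total token count.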

To put this into perspective, consider a customer service chatbot that processes an average of 50,000 user queries daily. Each query and response pair might average 50 tokens combined. In a single day, that translates to 2,500,000 tokens, up…