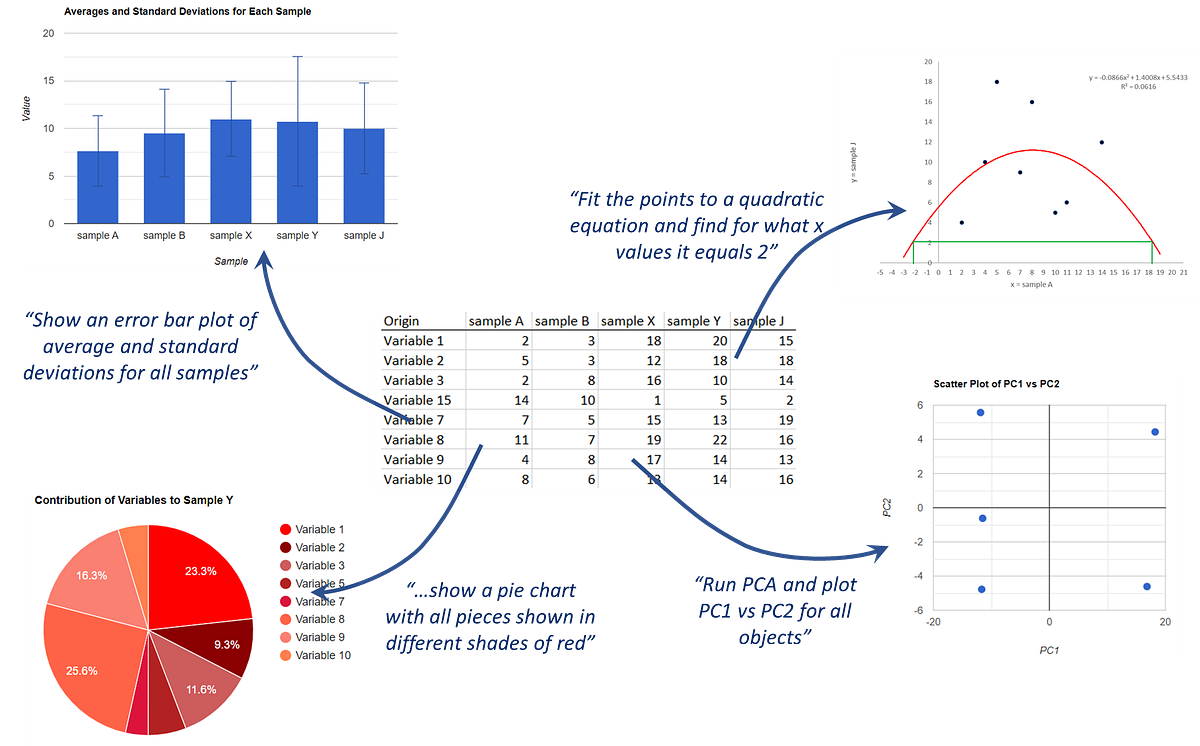

Recently I started exploring how to use large language models (LLMs) to automate data analysis, such that you can ask them questions about a dataset in natural language and they answer by generating and running code. I implemented all this as a web app where I (and you!) can try out the power and limitations of this approach, which at the moment relies entirely on the LLM writing vanilla JavaScript:

As I explain in that article, my main interest is addressing this question:

Can I ask an LLM questions about a dataset in my own words and have it translate these questions into the maths or scripting required to answer them?

After the tests reported there, I pinpointed several limitations that, honestly, preclude the most interesting applications. Namely, while both GPT-3.5-turbo and GPT-4 demonstrate an understanding of user queries and can generate proper code for various data analysis tasks, challenges arise with complex mathematical operations and with requests beyond a fairly modest level of complexity. And I’m not talking about very high complexity: for example, both LLMs could produce correct code to run a linear regression but failed at quadratic fits, and were just totally lost when trying to implement procedures such as principal component analysis (PCA). What’s worse, they often wouldn’t even “realize” that the task was too much for them, hallucinating code that looked OK on a quick pass (for example, the PCA procedure tried to invoke singular value decomposition, SVD) and sometimes didn’t even crash, yet was plain wrong.
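To give a sense of how modest the "quadratic fit" request really is, here is a minimal sketch of what correct vanilla JavaScript for it could look like. This is my own illustration, not code produced by either LLM nor taken from the web app. The function names `fitQuadratic` and `solveAugmented` are hypothetical, and I'm assuming an ordinary least-squares fit solved through the normal equations, which is only one of several reasonable ways to do it.

```javascript
// Illustrative sketch only: fitQuadratic and solveAugmented are hypothetical names.
// Fits y ≈ c0 + c1*x + c2*x^2 by ordinary least squares (normal equations).
function fitQuadratic(xs, ys) {
  if (xs.length !== ys.length || xs.length < 3) {
    throw new Error("Need at least 3 (x, y) pairs, with xs and ys of equal length");
  }
  // Power sums S[k] = sum(x^k) for k = 0..4, and T[k] = sum(y * x^k) for k = 0..2.
  const S = [0, 0, 0, 0, 0];
  const T = [0, 0, 0];
  for (let i = 0; i < xs.length; i++) {
    let p = 1; // holds xs[i]^k
    for (let k = 0; k <= 4; k++) {
      S[k] += p;
      if (k <= 2) T[k] += ys[i] * p;
      p *= xs[i];
    }
  }
  // Augmented matrix of the normal equations: A[r][c] = S[r + c], last column = T.
  const M = [
    [S[0], S[1], S[2], T[0]],
    [S[1], S[2], S[3], T[1]],
    [S[2], S[3], S[4], T[2]],
  ];
  return solveAugmented(M); // [c0, c1, c2]
}

// Gaussian elimination with partial pivoting on an n x (n + 1) augmented matrix.
function solveAugmented(M) {
  const n = M.length;
  for (let col = 0; col < n; col++) {
    // Choose the row with the largest absolute value in this column as pivot.
    let pivot = col;
    for (let r = col + 1; r < n; r++) {
      if (Math.abs(M[r][col]) > Math.abs(M[pivot][col])) pivot = r;
    }
    if (Math.abs(M[pivot][col]) < 1e-12) {
      throw new Error("Singular system: need at least 3 distinct x values");
    }
    const tmp = M[col];
    M[col] = M[pivot];
    M[pivot] = tmp;
    // Eliminate this column from all rows below the pivot row.
    for (let r = col + 1; r < n; r++) {
      const f = M[r][col] / M[col][col];
      for (let k = col; k <= n; k++) M[r][k] -= f * M[col][k];
    }
  }
  // Back substitution.
  const x = new Array(n).fill(0);
  for (let r = n - 1; r >= 0; r--) {
    let s = M[r][n];
    for (let k = r + 1; k < n; k++) s -= M[r][k] * x[k];
    x[r] = s / M[r][r];
  }
  return x;
}
```

Calling `fitQuadratic([0, 1, 2, 3, 4], [1, 6, 17, 34, 57])` on points generated from y = 1 + 2x + 3x² should recover coefficients very close to 1, 2 and 3. Note that the normal equations can become ill-conditioned for large or widely spread x values, so this is a sketch for small, well-behaved datasets rather than a robust routine.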